
Nvidia-Docker For Mac

10/27/2021

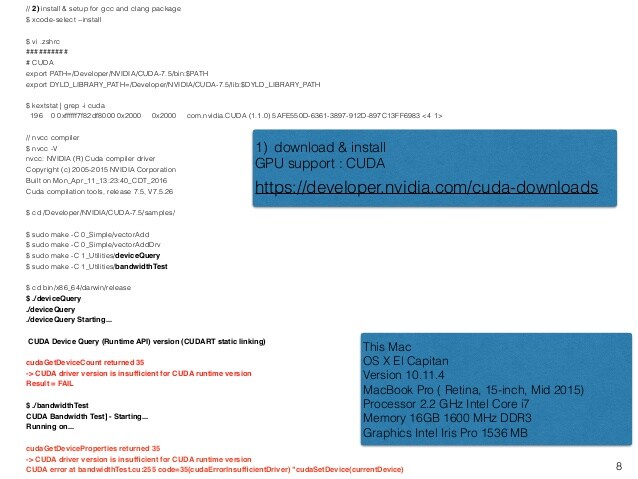

In this post, we walk through the steps required to access your machine's GPU within a Docker container. Configuring the GPU on your machine can be immensely difficult: the configuration steps change based on your machine's operating system and the kind of NVIDIA GPU that your machine has. Certain things, like the CPU drivers, are pre-configured for you, but the GPU is not configured when you run a Docker container. Luckily, you have found the solution explained here. It is called the NVIDIA Container Toolkit! It simplifies the process of building and deploying containerized GPU-accelerated applications to desktop, cloud, or data centers.

Figure: NVIDIA Container Toolkit.

The toolkit's NVIDIA Container Runtime is a GPU-aware container runtime, compatible with the Open Container Initiative (OCI) specification used by Docker, CRI-O, and other popular container technologies, so I can use it with any Docker container. However, nvidia-docker is still not supported on Windows and Mac; for Mac OS X, please see Setup GPU for Mac. The Dockerfiles in this post have been developed for systems with a GPU supported by nvidia-docker.

Let's ensure everything works as expected by running nvidia-smi, an NVIDIA utility for monitoring (and managing) GPUs, inside a container started from the nvidia/cuda image:

`docker run --runtime=nvidia --rm nvidia/cuda nvidia-smi`

If the GPU is exposed correctly, this command prints the same nvidia-smi status table (GPU name, driver version, memory usage) that you see on the host.
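To make the check above repeatable, here is a minimal Python sketch that assembles the verification command and runs it with `subprocess`. It assumes Docker 19.03 or later with the NVIDIA Container Toolkit installed, where `--gpus all` exposes every GPU; on older setups that registered the `nvidia` runtime, the legacy `--runtime=nvidia` form is used instead. The function names are hypothetical, and the default image is the `nvidia/cuda:10.2-base` tag used later in this post; substitute a tag that is available to you.

```python
import shutil
import subprocess


def gpu_check_command(image="nvidia/cuda:10.2-base", legacy_runtime=False):
    """Build the argv for running nvidia-smi inside a throwaway container.

    legacy_runtime=True uses the older `--runtime=nvidia` flag (nvidia-docker2);
    otherwise the `--gpus all` flag available since Docker 19.03 is used.
    """
    gpu_flags = ["--runtime=nvidia"] if legacy_runtime else ["--gpus", "all"]
    # --rm removes the container as soon as nvidia-smi exits.
    return ["docker", "run", "--rm", *gpu_flags, image, "nvidia-smi"]


def container_sees_gpu(**kwargs):
    """Return True if nvidia-smi exits successfully inside the container."""
    if shutil.which("docker") is None:
        return False  # Docker CLI is not installed on this machine
    result = subprocess.run(gpu_check_command(**kwargs), capture_output=True, text=True)
    if result.returncode != 0:
        # e.g. toolkit not installed, unknown "nvidia" runtime, or no host driver
        print(result.stderr.strip())
    return result.returncode == 0
```

On a correctly configured Linux host, `container_sees_gpu()` should return True; passing `legacy_runtime=True` reproduces the `--runtime=nvidia` command shown above (apart from the image tag).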

Installation on Host

Before Docker can use the GPU, the GPU has to work on your base machine. As previously mentioned, this can be difficult given the plethora of operating system distributions, NVIDIA GPUs, and NVIDIA GPU drivers, and the exact commands you will run will vary based on these parameters. Here are some resources that you might find useful to configure the GPU on your base machine:

- NVIDIA's official toolkit documentation
- Installing NVIDIA drivers on Ubuntu guide
- Installing NVIDIA drivers from the command line

Once you have worked through those steps, you will know you are successful when running the nvidia-smi command on the host prints a status table listing your GPU and driver version.

Exposing the GPU Drivers to Docker

In order to get Docker to recognize the GPU, we need to make it aware of the GPU drivers. We do this in the image creation process: Docker image creation is a series of commands that configure the environment that our Docker container will be running in.

The Brute Force Approach - The brute force approach is to include the same commands that you used to configure the GPU on your base machine. It will look something like this in your Dockerfile (code credit to Stack Overflow):

```dockerfile
FROM ubuntu:14.04
RUN apt-get update && apt-get install -y build-essential
# Get the install files you used to install CUDA and the NVIDIA drivers on your host
ADD ./Downloads/nvidia_installers /tmp/nvidia
# Install the driver (-s: silent, -N: no network). The container shares the host's
# kernel, so only the user-space driver is installed, not the kernel module.
RUN /tmp/nvidia/NVIDIA-Linux-x86_64-331.62.run -s -N --no-kernel-module
# The driver installer leaves temp files behind during a docker build (I don't have
# any explanation why), and the CUDA installer fails if they are still there
RUN rm -rf /tmp/selfgz7
# CUDA installer
RUN /tmp/nvidia/cuda-linux64-rel-6.0.37-18176142.run -noprompt
# CUDA samples; comment this out if you don't want them
RUN /tmp/nvidia/cuda-samples-linux-6.0.37-18176142.run -noprompt -cudaprefix=/usr/local/cuda-6.0
# Add the CUDA libraries to the library path (ENV persists; "RUN export" would not)
ENV LD_LIBRARY_PATH=/usr/local/cuda/lib64
# Register the CUDA library directory with the dynamic linker
RUN echo "/usr/local/cuda/lib64" > /etc/ld.so.conf.d/cuda.conf && ldconfig
# Delete the installer files
RUN rm -rf /tmp/nvidia
```

The Downsides of the Brute Force Approach - First of all, every time you rebuild the Docker image you will have to reinstall the drivers, slowing down development. Second, if you decide to lift the Docker image off of the current machine and onto a new one that has a different GPU or operating system, or you would like new drivers, you will have to re-code this step for each machine.
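One concrete reason the brute force approach must be redone per machine: the user-space driver installed in the image (331.62 in the Dockerfile above) has to match the NVIDIA kernel module loaded on the host, otherwise tools like nvidia-smi typically fail with a driver/library version mismatch. The minimal Python sketch below performs that comparison. It assumes a Linux host where the loaded kernel module reports its version on the `NVRM version:` line of `/proc/driver/nvidia/version`; the helper names are hypothetical.

```python
import re
from pathlib import Path

# Matches the version in installer names such as NVIDIA-Linux-x86_64-331.62.run
INSTALLER_RE = re.compile(r"NVIDIA-Linux-[^-]+-(\d+(?:\.\d+)+)\.run$")
# Matches the first dotted version number on the "NVRM version:" line
NVRM_RE = re.compile(r"^NVRM version:.*?\b(\d+\.\d+(?:\.\d+)?)\b", re.MULTILINE)


def installer_version(filename):
    """Extract the driver version baked into the image from the .run file name."""
    match = INSTALLER_RE.search(filename)
    return match.group(1) if match else None


def host_driver_version(proc_text):
    """Extract the host kernel-module version from /proc/driver/nvidia/version text."""
    match = NVRM_RE.search(proc_text)
    return match.group(1) if match else None


def versions_match(filename, proc_path="/proc/driver/nvidia/version"):
    """True if the driver baked into the image matches the host's kernel module."""
    path = Path(proc_path)
    if not path.exists():
        return False  # no NVIDIA kernel module loaded, or not a Linux host
    host = host_driver_version(path.read_text())
    return host is not None and host == installer_version(filename)
```

On a host running driver 331.62, `versions_match("NVIDIA-Linux-x86_64-331.62.run")` should return True; on any other driver version it returns False, which is exactly the per-machine rework described above.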

The better alternative is to let the NVIDIA Container Toolkit pass the host's GPU drivers through to the container at run time, and to base your image on an NVIDIA CUDA image such as nvidia/cuda:10.2-base. If nvidia-smi runs inside such a container and lists your GPU: success! Our Docker container sees the GPU drivers. From this base state, you can develop your app accordingly.

The Power of the NVIDIA Container Toolkit - Now that you have written your image to pass through the base machine's GPU drivers, you will be able to lift the image off the current machine and deploy it to containers running on any instance that has an NVIDIA driver and the NVIDIA Container Toolkit installed. Pretty cool!

What if I need a different base image in my Dockerfile? Let's say you have been relying on a different base image in your Dockerfile. Then you should consider using the NVIDIA Container Toolkit alongside the base image that you currently have by using Docker multi-stage builds.

Nvidia-Docker Full Python Application

The layout of a fully built Dockerfile might look something like the following (where /app/ contains all of the Python files):

```dockerfile
FROM nvidia/cuda:10.2-base
# The CUDA base image does not ship Python, so install python3 and pip alongside curl
RUN apt-get update && apt-get install --no-install-recommends --no-install-suggests -y curl python3 python3-pip
COPY app/requirements_verbose.txt /app/requirements_verbose.txt
RUN pip3 install -r /app/requirements_verbose.txt
# Copy the application from the local path to the container path
COPY app/ /app/
WORKDIR /app
# Replace <entrypoint>.py with the script that trains and evaluates your model
CMD ["python3", "<entrypoint>.py"]
```

This is a full Python application using the NVIDIA Container Toolkit: the container trains and evaluates a deep learning model based on specifications, using the base machine's GPU. In my case, I use the NVIDIA Container Toolkit to power experimental deep learning frameworks.

Conclusion

Congratulations! Now you know how to expose GPU drivers to your running Docker container using the NVIDIA Container Toolkit.



Author: Shad
